Generic request throttling is fine but how does my favorite server – SharePoint – do it? Well, the answer isn’t clear. This changes the trigger to its settings display, which allows you to limit the number of concurrent Flow instances – or the degree of parallelism. This can be done by going to the ellipsis and then Settings for the trigger. Luckily, Flow offers a technique for limiting the number of active flows at any time. The result is that every Flow gets throttled to death – until so few remain that they can be handled inside of the capacity of the server. Even if each Flow is told to retry later, even the server telling the consumer to retry later may cause the server to need to push off work even further. If you have 100 Flows all starting in a relatively short time talking to the same back end server, it may be getting thousands of requests from Flow every minute. With the scalability of the Flow platform, what’s to stop you from running hundreds or thousands of Flows at the same time – against the same poor server that’s just trying to cope? ParallelismĪny given Flow may not be making that many requests, but what happens when there are many Flow instances running at the same time? If you do work on a queue that information is dropped into, you can’t necessarily control how many items will come in at the same time. This typically happens when you have multiple flows running at the same time. Sometimes the response from the server – and the default exponential interval – will be too small, and you’ll exhaust the three retries and end up failing your request. Unfortunately, that’s not always the case. Sure, the server could make a mistake with its guidance once but surely not twice. In theory, at least, you’ll never hit a retry more than once or twice. What happens when there is a conflict between the provided retry schedule and what the server responded with as a part of its 429 response? The good news is that Flow will use the larger of the two numbers. 
The default is implemented as one try plus three retries.

So that seems like a good place to start. The default settings, however, are supposed to do exponential waiting on retries and four retries. The action’s space in the flow will change to the settings view, where you can explicitly set the retry policy to exponential – which will further change the view to provide spaces for a maximum retry count, an interval, minimum interval, and maximum interval.

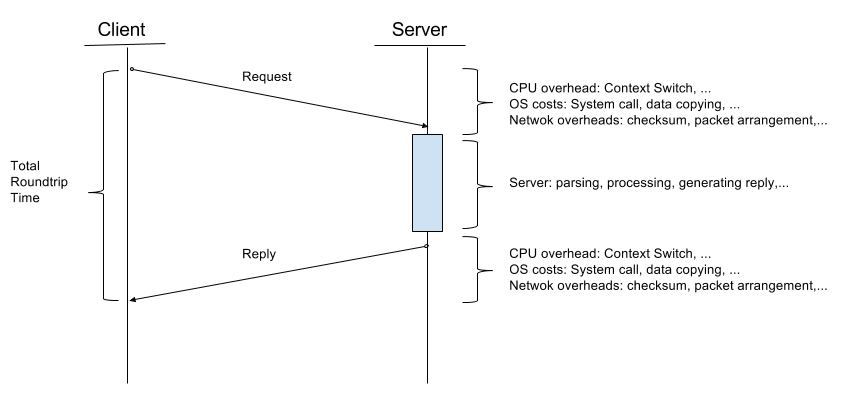

To do this, click the ellipsis at the right of the action and select Settings. Flow allows you to follow the default policy, which is described as exponential, or explicitly set the interval to exponential. So, four seconds becomes sixteen on the second retry, and 256 seconds on the second interval. The first time you get a 429, you stop for a period of time – say four seconds – and then each time you retry, you square the interval. The strategies vary but the default answer is an exponential interval. Automatic Retriesīecause a server may respond with a 429, most programs know to retry the request. The problem is that, when you’re dealing with so many different users and so many variables, it’s sometimes difficult to predict when the server will be willing or able to service a request. “Hey, I should be able to take care of that request for you after seven seconds” tells the program when it should retry the request – and here’s the kicker – expect that it will be able to be serviced. Sometimes these responses are kinder and will indicate when the person should come back. One of their defenses is to respond with “I’m too busy right now, come back later.” This comes back as the code HTTP status code 429. Web servers are under constant assault from well-intended users, the code written by bumbling idiot developers (of which occasionally I am one), and malicious people. Request Throttlingīefore we can explain how a flow gets throttled to death, we first must understand a bit about throttling. In this post, we’ll talk about what happens every day and then what strategies you can use to protect your workflow. Though it’s possible to protect your workflow instances from being throttled to death, it isn’t as easy as it might seem.

SharePoint’s just the accomplice in this crazy dance that will get your workflows killed. Except it’s not SharePoint’s throttling that is the true killer. We’re here to mourn the death of many a workflow instance at the hands of SharePoint’s HTTP throttling.
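
The retry arithmetic described in this post can be made concrete with a small Python sketch. It is only an illustration, not how Flow is implemented: Flow is configured through its settings view rather than code, the function names are invented for this example, and `send` is a hypothetical callable that returns a status code plus the server's suggested wait in seconds (or `None`). The squaring rule and the "larger of the two numbers" rule are taken from the description in this post.

```python
import time
from typing import Callable, List, Optional, Tuple


def squared_schedule(first_interval: float, max_retries: int) -> List[float]:
    """Waits before each retry when the interval is squared every time."""
    waits = []
    interval = first_interval
    for _ in range(max_retries):
        waits.append(interval)
        interval = interval ** 2
    return waits


def effective_wait(scheduled: float, retry_after: Optional[float]) -> float:
    """Use the larger of the scheduled wait and the server's hint, if any."""
    if retry_after is None:
        return scheduled
    return max(scheduled, retry_after)


def call_with_retries(
    send: Callable[[], Tuple[int, Optional[float]]],
    first_interval: float = 4.0,
    max_retries: int = 3,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """One try plus up to max_retries retries; returns the last status code."""
    status, retry_after = send()
    for scheduled in squared_schedule(first_interval, max_retries):
        if status != 429:
            break  # anything other than "too busy" ends the retry loop
        sleep(effective_wait(scheduled, retry_after))
        status, retry_after = send()
    return status
```

With a four-second starting interval and three retries, `squared_schedule(4, 3)` gives waits of 4, 16, and 256 seconds. If the request is still answered with a 429 after the third retry, `call_with_retries` returns 429, which corresponds to the failed request described in this post.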

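
Limiting the degree of parallelism can be illustrated the same way. The sketch below is an analogy in Python rather than anything Flow exposes: `run_with_limit` and `flow_instance` are hypothetical names, and the short sleep stands in for a call to the back end server. A semaphore plays the role of the trigger's concurrency setting, so no more than `limit` simulated instances talk to the server at once.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


def run_with_limit(job_count: int, limit: int) -> int:
    """Run job_count simulated instances, at most `limit` at a time.

    Returns the highest number of instances that were active at once.
    """
    gate = threading.Semaphore(limit)  # stands in for the concurrency setting
    lock = threading.Lock()            # protects the two counters below
    active = 0
    peak = 0

    def flow_instance(_: int) -> None:
        nonlocal active, peak
        with gate:  # wait here until one of the `limit` slots is free
            with lock:
                active += 1
                peak = max(peak, active)
            time.sleep(0.01)  # stand-in for a request to the back end
            with lock:
                active -= 1

    # One thread per instance, so only the semaphore limits concurrency.
    with ThreadPoolExecutor(max_workers=job_count) as pool:
        list(pool.map(flow_instance, range(job_count)))
    return peak
```

Calling `run_with_limit(100, 5)` starts 100 simulated instances but never lets more than five reach the server together. The rest queue up instead of piling on and collecting 429 responses.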